Relying too heavily on voice assistants can be risky because they are susceptible to hacking, says an international team of researchers. Their study found that voice assistants such as Amazon’s Alexa, Apple’s Siri and Google Assistant can be hijacked by shining a laser at the devices’ microphones.

The researchers report that they took control of smart speakers and other voice-assistant-enabled devices by aiming laser light at their microphones. They tested devices running Alexa, Google Assistant, and Siri, and say that hitting a device’s microphone with the beam was enough to take over its voice assistant. The experiments were conducted by a team of experts based at the University of Michigan and in Tokyo.

Laser Light Can Control Voice Assistants and Smart Speakers

Smart devices have already been the subject of many privacy controversies. Companies such as Apple and Amazon reportedly employed contractors to listen to users’ conversations with their smart assistants. The latest, unexpected flaw discovered by the researchers is now making headlines: the devices are susceptible to lasers.

Research Paper Says Researchers Tricked Google Home Device

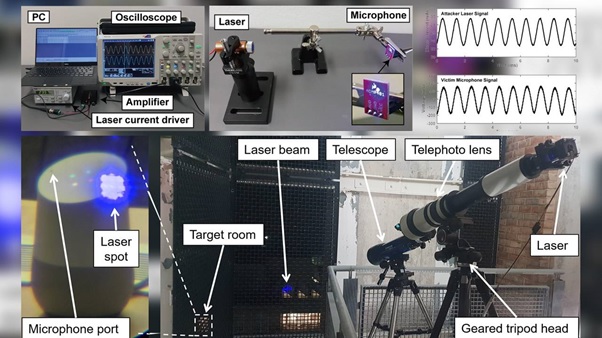

The team published a paper on Monday reporting that the researchers tricked a Google Home device into opening a garage door from a distance of 230 feet. They focused the laser on the device with a telephoto lens and took control of it. The laser tricked the microphone into producing electrical signals, giving the impression that it was receiving voice commands. The researchers say that hackers could use the method to trick devices into carrying out a range of commands, such as making online purchases, unlocking and starting cars remotely, and controlling smart home switches connected to the smart speaker.

Microphones of Smart Speakers Can Be Manipulated by Light Signals Aimed Directly at Them

According to the report, a device’s microphone converts voice and other sound into electrical signals, which the device then acts on. Light aimed directly at the microphone can make it respond in the same way, as if it were receiving a voice instruction.

Google, Apple and Amazon Taking Note of the Glitch

The research team has shared its findings with companies that build voice technology into their products, including Google, Apple, Tesla, and Amazon, alerting all the major market players to the possible security threat.

“We are closely reviewing this research paper. Protecting our users is paramount, and we’re always looking at ways to improve the security of our devices,” a Google spokesperson said in an emailed statement.

It is worth noting that a laser can control not just smart speakers but also mobile phones, tablets, and other devices that run voice-controlled smart assistants.